Apple is actively prototyping ways to use large language models (LLMs) to enhance iPhone functionality in the near future. AI research presentations at Apple indicate that, thanks to chip efficiency breakthroughs, iOS devices may someday host processors powerful enough to enable next-generation assistant features.

Let’s decode how Apple is steering iPhone evolution toward smarter LLM integration, discuss the practical everyday improvements these AI advances could bring to mobile devices, and weigh the privacy and ethical challenges that still need consideration before a full rollout.

The Mind-Bending Potential of Large Language Models

So exactly how might large language models meaningfully bolster our iPhone experiences in daily life?

By training on massive text, code and visual datasets, LLMs like Google’s PaLM or Anthropic’s Claude develop a remarkable ability to understand and respond to freeform human prompts, with applications spanning:

- Conversational voice assistance

- Personalized memory curation

- Optimized writing enhancements

- Contextual task automation

- Fluid video/photo editing directives

And that merely scratches the surface of how LLMs could act as iPhone productivity amplifiers!

Optimizing Large Language Models for Mobile

Traditionally, running LLMs for consumer use cases has demanded resource-intensive server farms to handle the heavy computational requirements.

But thanks to the efficiency of Apple’s custom mobile silicon, its researchers are now exploring streamlined model architectures that maximize on-device capabilities without depending on cloud offload.

By engineering LLM code carefully around the iPhone chip’s strengths, Apple intends to bring AI everywhere, with enough speed that casually invoking Siri involves no latency to dampen engagement.

Everyday Scenarios Enhanced Through On-Device AI

While LLM integrations on the iPhone likely remain years away, even demo use cases hint at tantalizing productivity potential:

- Intelligent Photo Management – Automatic organization/captioning

- Enhanced Health Insights – Personalized medical guidance and terminology translations

- Assisted Smart Home Control – Siri grasps contextual device grouping commands

- Dynamic App Recommendations – Proactively suggest productivity apps fitting user habits

Infusing LLMs into Siri and other iOS services would give them enough intelligence to close the gaps between human and machine communication patterns, marking the next evolution in mobile AI.

Addressing Responsible AI Concerns Around Large Language Models

However, despite the promising productivity possibilities, LLM adoption faces responsible-innovation challenges that require transparency around aspects like:

- Eliminating Unconscious Bias – Ensure representative training data

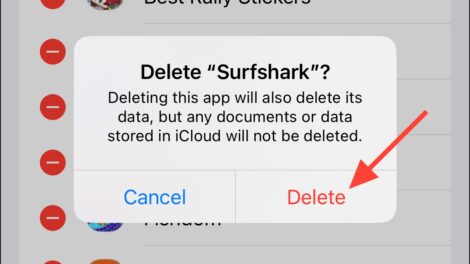

- Protecting Privacy – Local processing without data leakage

- Cybersecurity Vigilance – Harden against external model exploitation

By systematically addressing ethical AI practices during development, Apple maintains high standards and keeps generative risks from eclipsing the positives before public deployment.

Vision: Ubiquitous AI Enhancing Lives on Apple Devices

Looking years ahead, LLMs with comprehensive digital awareness could catalyze seamless ambient computing environments, synthesizing data to simplify tasks we currently handle manually:

- Intelligently automating repetitive routines

- Alerting caregivers to changes in seniors’ health patterns

- Optimizing energy consumption across connected appliances

- Curating media that resonates with personal preferences

The possibilities remain boundless as AI surfaces what is relevant, removes noise and accelerates our goals, thanks to Apple’s ongoing push for responsible standards.
